Frosty Consulting has a client who wants to be able to load data from a stage in a manual but dynamic fashion. To be more specific they want to be able to:

- execute a single command (stored procedure)

- do so manually, meaning it won’t be scheduled and there won’t be any Snowpipes

- dynamically determine the warehouse size, if a file is over 10KB they want to use a small warehouse, anything under that size should be handled by an xsmall warehouse.

Click to see the skeleton script

-- Create the warehouses

create warehouse if not exists my_xsmall_wh

with warehouse_size = XSMALL

auto_suspend = 120;

create warehouse if not exists my_small_wh

with warehouse_size = SMALL

auto_suspend = 120;

-- Create the table

create or replace table <table_name>

(

date_time datetime,

trans_amount double

);

-- Create the stage

create or replace stage week_10_frosty_stage

url = 's3://frostyfridaychallenges/challenge_10/'

file_format = <enter_file_format>;

-- Create the stored procedure

create or replace procedure dynamic_warehouse_data_load(stage_name string, table_name string)

<code goes here>

-- Call the stored procedure.

call dynamic_warehouse_data_load('week_10_frosty_friday_stage', '<table_name>');Use the above skeleton script, and add the stored procedure.

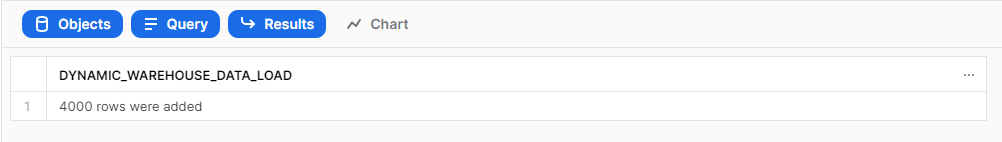

RESULT

When you execute the last line of the above script “call dynamic_warehouse_data_load()” then you should get the following result.

Remember if you want to participate:

- Sign up as a member of Frosty Friday. You can do this by clicking on the sidebar, and then going to ‘REGISTER‘ (note joining our mailing list does not give you a Frosty Friday account)

- Post your code to GitHub and make it publicly available (Check out our guide if you don’t know how to here)

- Post the URL in the comments of the challenge.

15 responses to “Week 10 – Hard”

-

This was a frustrating challenge as I discovered a limitation with Python Stored Procedures. The requirement was to change warehouses based on the file size, and Python Stored Procedures currently do not support changing warehouse or USE WAREHOUSE commands.

I only discovered this limitation when I had nearly finished the challenge. Instead of rewriting my solution in another language, I simply tweaked the challenge to resize the warehouse instead.

Solution URL:

https://github.com/ChrisHastieIW/Frosty-Friday/tree/main/Week%2010%20-%20Hard%20-%20Stored%20ProceduresOn the plus side, this challenge timed very well for me as I’d just published my blog on Python Stored Procedures in the Snowflake UI, so I was already familiar with the topic!

Link to blog for interested parties:

https://interworks.com/blog/2022/08/16/a-definitive-guide-to-python-stored-procedures-in-the-snowflake-ui/ -

You may find this useful. It goes a bit beyond this requirement as it copies data from one db table (atm, oracle, other dbs can be included) to snowflake. steps are:- connections, create table in snowflake, export data to files of 250Mb zipped, load to internal stage, copy from stage to snowflake, all in a single command. The dynamic sizing of VWH is not present but would be simple to implement. https://snowflakecomputing.atlassian.net/wiki/spaces/~5da9bcbde954f00c2353dc5f/pages/2496922407/OracleToSnowflakeCopy

-

https://github.com/sachingithubaccnt/Frosty_Friday/blob/main/Week10_Hard

I tried to implement this with JavaScript procedure. Also i created two tables, Small and Big and based on the file size and warehouse respective tables would be populated- Solution URL – https://github.com/sachingithubaccnt/Frosty_Friday/blob/main/Week10_Hard

-

Hi Ian,

You solution seems quite interesting and I wanted to view the steps performed. When I tried to access the solution it is reporting me error.

Could you please help me to access the same.

Mail id : sachin.mittal04@gmail.com-

Please let me know your github login and i can grant you access.

-

-

-

I seem to have gone down quite a different route to ChrisHastie has gone, with mine being a lot more basic in terms of functionality. I didn’t come across the limitation of not being able to USE WAREHOUSE in a python stored procedure. I found I just needed to set the collect to a variable, even thought I wasn’t going to use it.

Good one to get started with, but mine can definitely be improved functionality wise.

- Solution URL – https://github.com/ChrisBBiztory/FrostyFriday/blob/main/Week_10.sql

-

I did this with the long-waited sql-based stored procedures, nice challenge to learn how to interact with files in stage from the stored procedure.

- Solution URL – https://github.com/pekka-kanerva/frosty-friday/blob/main/week10/ff-week10-solution.sql

-

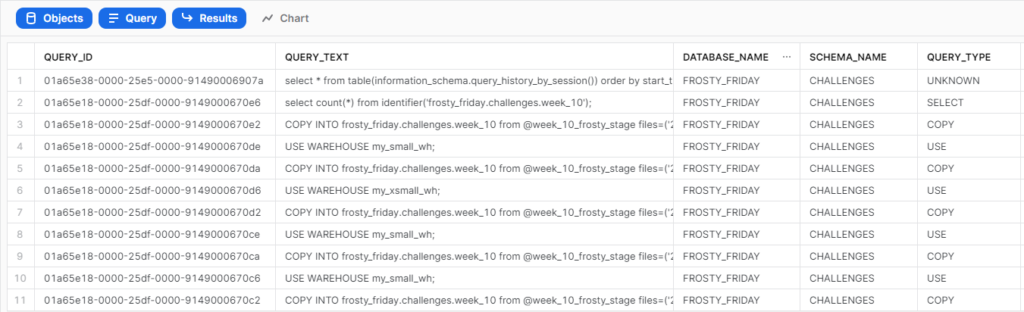

Using a directory table and creating an array list of files to run Copy once for all files for each warehouse.

Also using LOAD_HISTORY to get number of records captured.

Using timestamp to get the row count.- Solution URL – https://github.com/ankitsr92/Ankit_Work/blob/main/FrostyFriday/Week1/Week10.sql

-

well, it works, but kind of messy

https://github.com/dsmdavid/frostyfridays-sf/blob/main/macros/init_for_challenge_10.sql -

Challenge 10 Blog: https://theinformationlab.nl/en/2022/11/15/snowflake-stored-procedures/

- Solution URL – https://github.com/Atzmonky/snowflake/blob/main/ch10_Stored_procedures.sql

-

The hardest one I’ve ever done, copied structure form Atzmonky’s work. Definitely need more practice.

- Solution URL – https://github.com/zlzlzl2-data/FrostyFriday/blob/main/FF10_LZ.sql

-

Love a stored proc challenge. In hindsight I wonder if I could have done this more efficiently as I ended up using 2 cursors to loop over seperate resultsets.

- Solution URL – https://github.com/jameskalfox/frosty-friday-snowflake-challenges/blob/main/Week_10.sql

-

I’d like to expand on ChrisHastie’s findings about Python procedures,

1. You can’t run “USE” to change context or warehouse

2. You are not allowed to create another session in a python procedure.I think I explored all the ways to change the warehouse here so I followed his solution which is to alter the warehouse size instead.

- Solution URL – https://github.com/darylkit/Frosty_Friday/blob/main/Week%2010%20-%20Stored%20Procedures/stored_procedures.sql

-

先日、Previewになった Snowflake Notebookを使って回答してみました

COPY INTOのinclude_metadataオプションを使い、メタデータを取り込む工夫をしてみました

—–

I recently tried using the Snowflake Notebook, which became available in Preview the other day. I experimented with using the include_metadata option in COPY INTO to incorporate metadata.- Solution URL – https://github.com/gakut12/Frosty-Friday/tree/main/week10_hard_stored_procedure

Leave a Reply

You must be logged in to post a comment.